New Meta features

Experiments on in-ad set creative testing, and AB results from flexible ad units

We tested two new Meta features across five clients. Here’s what happened

Meta is shipping fast at the moment. When I initially wrote this, the latest ability to push spend to specific ads hadn’t been shipped. As I rewrite this, yet another new feature is launching. This is some of the most useful and fast-paced product development in Ads Manager we’ve seen yet.

Late last year, Meta started testing a ‘creative testing tool’ where you can launch five ads inside an ad set without disrupting learning. Five ads is limiting for most accounts, but when used in conjunction with the creative testing tool, it’s potentially a viable way to run accounts.

We’ve been testing these for the last few months across a series of our accounts. We’ve done a mixture of isolated AB tests and before-and-after experiments.

Here’s what we’ve learnt so far this year, and what we’re going to be doing with it.

Flexible ad units: the promise vs the reality

At the beginning of last year, we won an early-stage client who had a single flexible ad that was working. It contained 10 separate, distinctly different ads within it.

One of the first moves we made was to move away from the flexible ad unit.

Our methodology is strongly rooted in creative testing, learning and iteration. We are intentional about creative tests, approach them strategically, and use every week’s experiment to inform follow-ons.

So for us, flexible ad units remove information that allows us to learn.

We were never able to find product-channel fit for that client. We eventually re-tested the flexible ad unit in case there was some magic in the delivery of the unit itself, but it never rebounded. For a long time we were confident in our decision.

Then late last year (2025), I saw rumblings on X about the unit. Some media buyers were claiming strong performance from these units over and above typical setups. The 99th percentile Consolidationists would argue that the more diversity you can fit into individual units the better. And lots of belated-Andromeda hype all pointed to the valuelessness of variants within individual units.

Throw into the mix the new creative testing tool, and it felt like it was time to revisit.

We started running a series of tests. Some before/after, and then a couple of stricter tests.

Flexible ad units: A/B test results

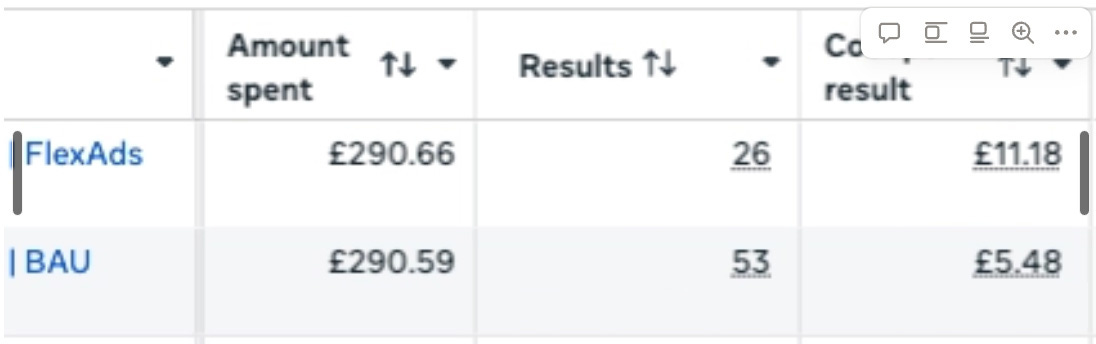

We ran two isolated AB tests using Meta’s testing tool. In each test, we ran the same setup:

BAU contained 3-5 variants of an ad

Flex ads contained a single ad unit with each of those variants within it

We ran this for a week.

The outcome in both tests was stark: the flexible ad unit variant ran at double the CPA of our BAU.

This was an annoying one for us. Had the flex unit won, this would have hugely increased our capacity for ad volume in a consolidated way.

As it stands, we have two very similar test results, with anecdotal before-and-after results showing similar things.

Summary: we’re deprioritising flexible ad units

Meta’s creative testing tool: good for the wrong reasons

The other tool, which we first ran tests on in October 2025, is different.

The concept here is that you can run creative testing inside your main hero campaign and ad set, rather than inside a separate setup.

Most accounts these days are running some form of setup that encompasses (1) dedicated testing and (2) a scale/BAU/hero setup. We are the same.

This new tool means being able to test inside those heroes without disrupting performance.

You force spend into the new ads, and at the end of the test, they can then scale.

The logic here is good: you get the benefit of not having to disrupt something that’s working, and the ads build data in the place where they will live.

These have limits. You’re capped at five launches per week, and because we still like to test variants, that cap limits what we can launch.

We’ve been testing this now in a series of accounts. Here’s what we’ve found.

Test-week performance is misleading

During the test week, almost every concept looks like a loser. CPAs during the learning phase were consistently 20-60% higher than the business-as-usual benchmark.

Depending on client stage, we expect to see a 20-40% win rate, defined as the share of concepts producing CPAs that are the same as or better than the account average.

In these tests, no launches saw winning ads. Now, an individual week doesn’t prove anything, nor does a single client experience. But we have now looked at this across a series of accounts and across multiple weeks. Repeatedly we see the same thing: a win rate of about 6% rather than the usual 20-40%.

Note: we’ve not run explicit AB tests here where we ran our usual creative testing structure alongside this setup. Given the complexity and cost of isolating that test with audiences, we opted against it in favour of faster learning.
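To make the win-rate definition concrete, here is a minimal sketch of how it could be calculated. The function name, the account average and the CPA figures are all made up for illustration; they are not numbers from our accounts.

```python
def win_rate(concept_cpas, account_avg_cpa):
    """Share of concepts whose CPA is the same as or lower than the account average."""
    if not concept_cpas:
        return 0.0
    wins = sum(1 for cpa in concept_cpas if cpa <= account_avg_cpa)
    return wins / len(concept_cpas)


# Hypothetical figures: an account averaging a CPA of 50, and 16 concepts
# launched through the testing tool, only one of which matches or beats it.
account_avg_cpa = 50.0
test_week_cpas = [48.0] + [62.0, 70.0, 65.0, 80.0, 75.0] * 3

rate = win_rate(test_week_cpas, account_avg_cpa)
print(f"Test-week win rate: {rate:.0%}")
```

One winner in sixteen launches lands in the same region as the roughly 6% we describe, against the 20-40% we would normally expect.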

If we’d judged these on the test week alone, we’d have binned all the ads.

But we decided to keep ads live to see what would happen afterwards, and this is where it got really interesting.

Post-test-week performance improved almost across the board.

One ad that had 60% higher CPA during test week graduated and had a CPA 40% below the account average.

Not only was the test week a poor indicator of CPA, it also didn’t indicate what would happen with scale.
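For anyone who wants to run the same comparison on their own account, a rough sketch of the arithmetic is below. The helper name and the CPA values are hypothetical; only the percentage swing (60% above average in test week, 40% below afterwards) mirrors the example above.

```python
def pct_vs_account(ad_cpa, account_avg_cpa):
    """Percentage difference between an ad's CPA and the account average.

    Positive means the ad is more expensive than average, negative means cheaper.
    """
    return (ad_cpa - account_avg_cpa) / account_avg_cpa * 100


# Hypothetical figures chosen to reproduce the swing described in the text.
account_avg_cpa = 50.0
test_week_cpa = 80.0   # assumed CPA during the forced-spend test week
post_test_cpa = 30.0   # assumed CPA after the ad graduated

print(f"Test week: {pct_vs_account(test_week_cpa, account_avg_cpa):+.0f}% vs account")
print(f"Post test: {pct_vs_account(post_test_cpa, account_avg_cpa):+.0f}% vs account")
```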

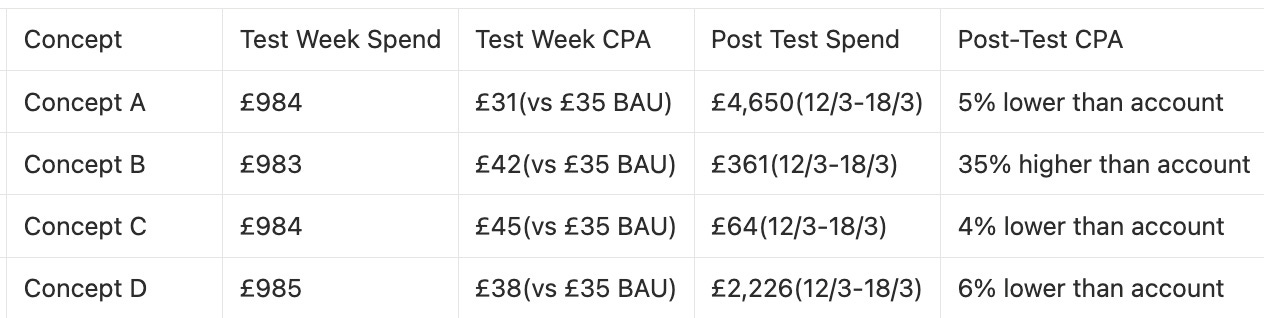

Here is the activity we saw in one campaign.

Note: numbers have been anonymised but % differences remain the same.

During test week, Concept A was one of the rare concepts that beat the test setup.

B, C, and D all had higher CPAs and were what we would have deemed fails.

And yet in the post-test period, we saw:

Concept A scale with better CPA than the account, as we would hope for such a definitive winner.

Concept D, previously a marginal loser, scaled to about half of Concept A’s growth, but with an improving CPA.

Concepts B and C both remained fails, and were ads we ended up turning off.

In other account tests, we saw more results like concept D: those with CPAs that were higher than account CPAs, which in usual structures we’d deem losers, becoming winners afterwards.
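One way to spot these late winners is to compare each concept’s test-week verdict with its post-test verdict against the same account average. The sketch below is an assumption about how you might structure that check, not a description of our internal tooling; the concept names, labels and CPA values are invented.

```python
from dataclasses import dataclass


@dataclass
class Concept:
    name: str
    test_week_cpa: float
    post_test_cpa: float


def verdict(concept, account_avg_cpa):
    """Label a concept by whether it matched or beat the account CPA in each period."""
    won_test = concept.test_week_cpa <= account_avg_cpa
    won_post = concept.post_test_cpa <= account_avg_cpa
    if won_test and won_post:
        return "winner in both periods"
    if won_post:
        return "late winner (missed by test week)"
    if won_test:
        return "faded after test week"
    return "fail"


# Hypothetical account average and concepts, for illustration only.
account_avg_cpa = 50.0
concepts = [
    Concept("X", test_week_cpa=45.0, post_test_cpa=42.0),
    Concept("Y", test_week_cpa=70.0, post_test_cpa=65.0),
    Concept("Z", test_week_cpa=58.0, post_test_cpa=47.0),
]

for concept in concepts:
    print(concept.name, "->", verdict(concept, account_avg_cpa))
```

Anything labelled a late winner is an ad that a test-week-only read would have switched off.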

Creative testing tool is great for forcing spend

Our takeaway when we rounded up internally is that the creative testing tool isn’t an effective way to test creative, but it is an effective way to force spend and give ads a head start in a hero campaign.

And so there are instances now where we’re looking to use this setup with this in mind.

A few examples:

Where overall account spend is low and running isolated tests is expensive

Accounts with high CPAs where we use less-correlated, higher-funnel events for testing, as a means of getting spend into a conversion asset

Instances where certain ad sets have high-spending, low-performing ads that just can’t be shaken

The testing tool itself still helps identify rocketships – they seem to win regardless – but the lion’s share of winners don’t get picked up.

Meta update: March 2026 – push delivery to an ad

As I write this (Friday 27th), Meta has this week rolled out a new feature allowing you to force spend to individual ads inside the hero.

Our current use of the creative testing tool is essentially as a way to force spend onto new assets in a hero campaign before we see how they truly behave.

With this new launch in mind, it’s likely we’ll use the creative testing tool less and instead move to this new feature.

We are rolling out tests across the agency over the next two weeks and will be testing a variety of setups. Key things we want to understand:

How much spend to force in

Whether ads should be tested first

Whether this differs depending on creative testing method

If you’ve been playing with the new tool, I’d love to understand your setups.

Leave a comment as I’d love to hear how you’ve been finding these features.

Josh Lachkovic is the founder of Ballpoint, a creative growth agency for DTC and ecommerce brands. Subscribe to Early Stage Growth for weekly insights on growth, creative strategy, and experimentation.