“Have you ever considered turning Meta off?”

I had a client ask me this recently. They’re a newish client, and we’re pre-product channel fit. I’ve been asked variants of this question for a long time.

While it’s a slightly hackneyed solution (which we’ll get into in this post today), the motivation behind the idea is a good one.

As marketers, only part of our job is the actual work of making great creative that converts. It is equally our job to prove its value.

Meta can be tricky. Sometimes it over-attributes, other times it under-attributes.

Today, I want to share a few scenarios you might be in, to help identify your true, or “incremental”, CPA.

What you can expect today

Why Meta CPA is rarely what Meta says it is

How to measure true Meta CPA when:

Meta is your first channel and so far your only channel

You have grown your business through various other means, and you are now testing Meta

You have scaled your business effectively with Meta but now know customers come from multiple sources

You are running retention/remarketing activity

Our average adjustments to CPAs

Why Meta CPA is rarely what Meta says it is

Lazy marketers believe that Meta is out to get you.

I don’t buy that. They are of course a business that needs to make money. But there’s an equilibrium where the return to advertisers needs to be substantial enough for them to continue to invest. My experience to date has always been that the outcomes and incentives feel aligned for advertisers.

So if it’s not just Meta lying, then what is it?

Problem #1: Data shifts post iOS14.5

Considering this change happened half a decade ago, it’s odd that this is still a discussion. But for anyone with a memory of advertising in the 2010s, they’ll remember how much more data flowed to Meta. Privacy laws, and proactive changes from Apple mean that’s no longer the case.

The result is less data in platform, showing lower numbers of attributions.

Problem #2: Attribution doesn’t reflect how people buy

The bigger problem is attribution. During the 2010s, we all bought into the lie of attribution. Attribution is a form of measurement that prioritises assigning a value to a channel. Google Analytics does this. Google Ads does it, so does Meta.

Attribution is a flawed measurement protocol.

It doesn’t reflect how people buy. These days, many purchases go something like this:

See an ad → discuss with my wife → she or I purchase later on a different device

Google likely steals that attributed sale, Meta often doesn’t get credit.

Or, put another way: let’s say it’s a long-consideration product. I see an ad and sign up to the newsletter to stay on top of things. Then a few weeks later I decide to buy. Email captures the sale, but Meta caused it.

Attribution is a broken part of the picture, full stop.

For more on this, I recommend reading my startup guide to measurement.

Problem #3: Advertising to existing customers usually spells attribution issues

Problems 1 and 2 affect all marketing, but predominantly acquisition.

Retention marketing – ads spent on your existing customers – faces a different problem.

Meta is only modelling behaviour. And with existing customers, where there are more drivers of that purchase behaviour, it’s often less accurate - especially if you’re running viewthrough conversions with an existing audience.

Think of this scenario:

I am a customer and in the morning on the way to work I see a Facebook ad in my feed

Then I get your newsletter, which I read, and I go and purchase off the back of it

Meta quite rightly sees that a conversion happened after a view event and logs it

But even without the viewthrough issue, similar scenarios happen with click-attribution too

Problems summarised

At a high-level we can often assume the following:

Meta under-attributes acquisitions and over-attributes retention

So what can we do about it?

How to measure true Meta CPA

Measuring Meta CPA depends on where you are in your journey, and there are a few key specifics.

Most of the time, some form of lightweight modelling will do, so most of these approaches won’t cost you anything. That is, until your business gets more complex and you need to use more advanced statistical methods.

Here’s how you can measure the true incremental CPA for Meta based on a few scenarios.

Meta is your first channel and so far your only channel

If I was a founder of a new brand today, I would do Shopify and Meta only for as long as humanly possible.

For starters, this means that your buyers don’t have any issue deciding where to buy.

Every decision a customer has to make reduces their chance of purchase. Decision fatigue is real.

So too are distractions. If someone googles you and sees you’re also on Amazon, and heads there, it’s easy to get distracted with other things and forget all about your brand.

And another problem is price. If you’re on sale via third-party retailers who undercut your price, that eats into your sales. I’ve seen this first-hand with a few brands, which ultimately closed off a lot of their distribution to prevent the Google Shopping competition.

Simplicity is good. Single sales channels are good. Not only do they convert better, but you also retain all that data. DTC is not just a great way of acquiring customers – you acquire customers who can have way higher LTV because you can get to know them.

But single marketing channels are particularly good.

Why?

Measurement is simple with single channel

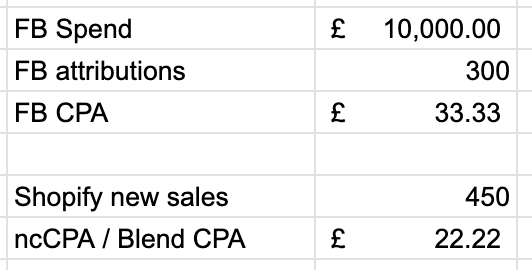

If you’re spending £10k per month on Meta, and you have no other marketing activity, then you know that your blend CPA is effectively your Meta CPA.

We call something like this the “halo multiplier”: the halo effect of your Meta activity on sales that attribution doesn’t credit to Meta. Maybe some of these show up as ‘word of mouth’ or ‘organic’ or ‘direct’ – but at the end of the day, they’re all being driven by your Meta activity.

Your CPA here is £22.22. Keep it simple.
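To make the arithmetic explicit, here is a minimal sketch. The £10k spend comes from the text; the 450 new customers and the 360 Meta-attributed customers are hypothetical figures, with 450 picked so the result matches the £22.22 above. Treating the halo multiplier as a ratio of total to Meta-attributed customers is one plausible reading, not a definition.

```python
def blended_cpa(total_spend: float, total_new_customers: int) -> float:
    """Blended CPA: all marketing spend divided by all new customers, however attributed."""
    if total_new_customers <= 0:
        raise ValueError("need at least one new customer to compute a CPA")
    return total_spend / total_new_customers


def halo_multiplier(total_new_customers: int, meta_attributed_customers: int) -> float:
    """Assumed reading: how many customers you really get per customer Meta reports."""
    return total_new_customers / meta_attributed_customers


# £10k spend is from the text; 450 and 360 are hypothetical.
cpa = blended_cpa(10_000, 450)
halo = halo_multiplier(450, 360)

print(f"Blended CPA: £{cpa:.2f}")        # → Blended CPA: £22.22
print(f"Halo multiplier: {halo:.2f}")    # → Halo multiplier: 1.25
```

With a single channel, the blended number is the one to trust; the multiplier just tells you how far Meta's own reporting is from it.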

Recommended Meta attribution window: 7-day click, 1-day view

You have grown your business through various other means, and you are now testing Meta

Now let’s assume you’ve come in from a different route. You’ve built a marvellous retail business and are stocked in Whole Foods or Ocado.

Things are great. Retail’s likely your background, or maybe you’ve built a great organic brand via SEO in a high intent area. Either way something’s going well, but you now need to think about DTC.

In this example, Meta is likely to go the way of a heavy ‘retention’ campaign and accidentally capture orders that don’t belong to it.

The reason is that sales are happening truly organically at this point. Whether it’s SEO sales or those created by customers who have seen you in their local Boots or Walgreens, Shopify is going to be registering sales throughout the day.

Now Meta is very good at finding people to serve ads to.

And if you have a Meta pixel on your site, and a user visits it, Meta will almost certainly retarget them. Or in an equally believable world, Meta has good data on who your customers are and is already showing ads to the people who are about to become customers.

The customer purchases – through no influence of the Meta ad – but Meta claims the credit. Or, as with the retention example, maybe the person was browsing around with high intent, didn’t make it all the way, and then the ad nudged them.

Now in these examples, that’s not Meta driving that sale, though it might legitimately, based on your setup, lay claim to it.

Here you need to try to calculate the true CPA by looking at the incremental uplift.

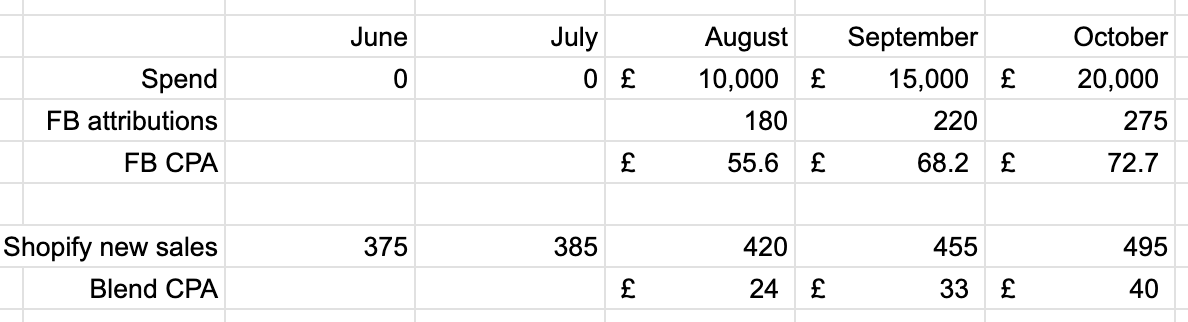

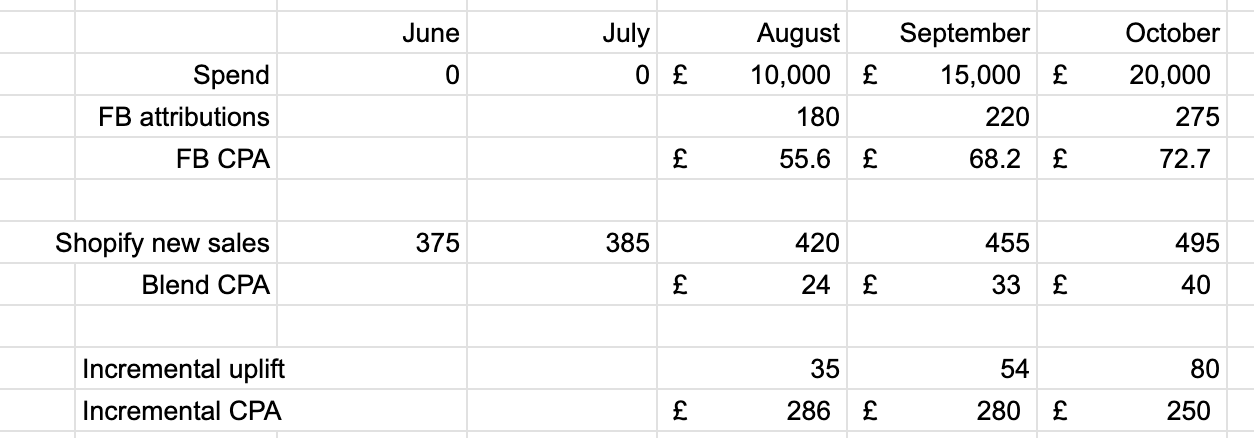

Imagine this scenario:

Now in this case, looking at your blend CPA doesn’t help you.

You may find that a £24 CPA is great and so you start scaling, only to see it shoot up the month after, and again the month after.

Here we might try to calculate the incremental CPA using a ‘before and after’ test.

Why before and after tests are highly flawed

In the intro I said that turning your ads off entirely was a hackneyed solution. It is, and for the same reason that the incremental CPA measurement I’m about to describe is flawed.

Before and after tests assume everything else stays the same.

We manage 20 accounts across £20m in ad spend, and I can tell you that there is so much variance day-to-day, and week-to-week, that before and after tests are at best a single datapoint. Any change to product or website has a dramatic effect. The news has a dramatic effect. In short, it’s impossible to use a before and after test to truly predict what would have happened anyway.

But it is useful as a single datapoint in a period of discovery.

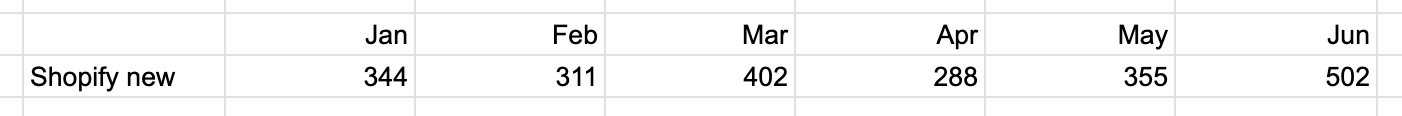

Let’s go back to our example, and now use our no-spend months as a proxy for what would have happened anyway.

We measure the ‘incremental uplift’ as the difference between what might have happened and what did happen.

This gives you the incremental CPA.

As you can see this changes the picture dramatically. In fact the FB campaigns got better every month here, even though blend and FB CPAs got worse.
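As a rough sketch of that calculation, assuming the baseline is simply the average of your no-spend months (a crude proxy, for all the reasons above). The function name and every number here are hypothetical and are not the figures from the example above.

```python
from statistics import mean


def incremental_cpa(spend, actual_orders, baseline_orders):
    """Spend divided by the orders above what we assume would have happened anyway."""
    uplift = actual_orders - baseline_orders
    if uplift <= 0:
        return None  # no measurable uplift, so no meaningful incremental CPA
    return spend / uplift


# Hypothetical data: orders in two months with Meta switched off.
no_spend_months = [980, 1020]
baseline = mean(no_spend_months)  # proxy for "what would have happened anyway"

# Hypothetical month with Meta running.
spend, orders = 5_000, 1_200

print(f"Blended CPA:     £{spend / orders:.2f}")
print(f"Incremental CPA: £{incremental_cpa(spend, orders, baseline):.2f}")
```

The gap between the two printed numbers is the whole point: the blended figure flatters Meta because most of those orders were coming in anyway.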

Now this rarely works cleanly, because more often than not you have months that look like this:

And as a result, it’s very hard to determine what a good ‘baseline’ is.

But as I say it’s a useful data point.

In times like this, your best bet is to switch up attribution.

Recommended Meta attribution window: Incremental attribution

You have scaled your business effectively with Meta but now know customers come from multiple sources

Let’s say you went DTC first, or you’ve grown DTC substantially.

You’re now spending £100k/month+ across Meta and Google, and your physical retail popups are driving lots of sales in-store as well as online based on your post-purchase checkout survey.

Measurement now steps up a gear in complexity.

At this point, there are two new tools that come your way for measurement.

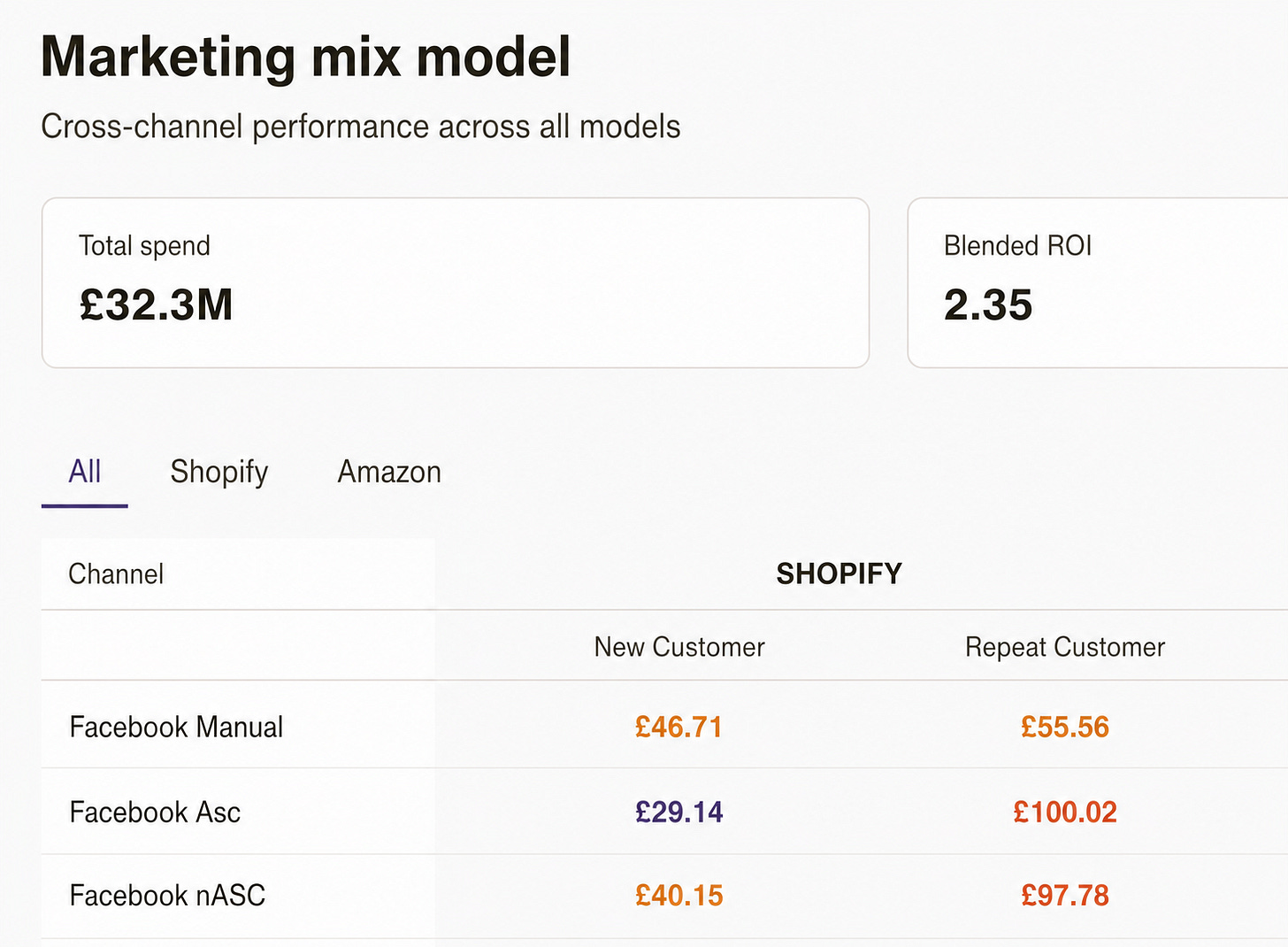

Measurement tool #1: the marketing mix model (MMM)

Modern marketing mix models use machine learning, though the earliest ones predate ML practices.

In their simplest form they evaluate input metrics like channel spend and impressions, and output ones such as sales and revenue.

The model then attempts to determine the impact of a channel.
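To give a feel for the idea, here is a deliberately toy sketch: a straight-line fit of weekly orders against weekly spend for one channel. Real MMMs handle several channels at once and usually account for things like carry-over, diminishing returns and seasonality, so treat this as an illustration of the principle only. All names and numbers are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    var = sum((xi - mean_x) ** 2 for xi in x)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical weekly data for a single channel.
weekly_spend = [0, 1_000, 2_000, 3_000]   # input: channel spend in £
weekly_orders = [200, 240, 280, 320]      # output: total orders

orders_per_pound, baseline_orders = fit_line(weekly_spend, weekly_orders)
modelled_cpa = 1 / orders_per_pound       # £ of spend per extra order

print(f"Modelled baseline: {baseline_orders:.0f} orders/week with zero spend")
print(f"Modelled CPA:      £{modelled_cpa:.2f}")
```

The intercept is the model's guess at what you would sell with no spend, and the slope is its guess at what each extra pound buys you.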

The cost of an MMM is usually high as you’ll need to find a data scientist to build one, or buy from one of the existing providers – all of whom are relatively expensive.

The downside to MMM is it’s a historic view, and usually requires weeks or months of data to examine at once. It’s not a daily, reactive view in most forms of the measurement.

Sidebar: we’re looking to launch a self-serve MMM for ~£399/month soon, register interest in comments or email if you want to be first to join

MMMs produce lots of output but at their simplest, they too can produce a simple CPA chart like we’re used to seeing. This comes from our internal tooling, data anonymised.

Measurement tool #2: a ‘lift’ or ‘holdout’ or ‘incrementality’ test.

MMMs are correlative, rather than causal. The only true causal measurement technique is the lift test.

Whatever you call this technique, it’s where you run a holdout.

It’s similar to what we did with our before and after test in example 2 above, but statistically rigorous.

You split your audience in half:

Half get served ads

The other half don’t

You look at the difference between the two.
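Here is a minimal sketch of how that difference might be read. It assumes two equally treated groups and uses a standard two-proportion z-test for the significance check, which is one common choice rather than the only one. The function name and all figures are hypothetical.

```python
import math


def lift_test(spend, exposed_n, exposed_conv, holdout_n, holdout_conv):
    """Compare conversion rates between the exposed group and the holdout."""
    p_exposed = exposed_conv / exposed_n
    p_holdout = holdout_conv / holdout_n

    # Conversions the exposed group would have had anyway, at the holdout's rate.
    expected_anyway = p_holdout * exposed_n
    incremental = exposed_conv - expected_anyway

    # Two-proportion z-test with a pooled rate, two-sided p-value.
    pooled = (exposed_conv + holdout_conv) / (exposed_n + holdout_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / exposed_n + 1 / holdout_n))
    z = (p_exposed - p_holdout) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))

    return {
        "incremental_conversions": incremental,
        "incremental_cpa": spend / incremental if incremental > 0 else None,
        "p_value": p_value,
    }


# Hypothetical test: 50k people per group, £5k spent on the exposed half.
result = lift_test(spend=5_000, exposed_n=50_000, exposed_conv=1_200,
                   holdout_n=50_000, holdout_conv=1_000)
print(result)
```

A small p-value suggests the gap between the groups is unlikely to be noise; the incremental CPA is only worth quoting once that bar is cleared.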

These tests are expensive to run. They require running holdout audiences, which limits your advertising’s impact. They require making very few changes to setup, so if you’re having a bad month, you need to stick it out.

And they’re costly in other ways.

But they will give you the best outcome of answering:

“what is the true meta CPA?”

Recommended Meta attribution window: no set path; test multiple with your measurement tools of choice.

You are running retention/remarketing activity

Finally, the question of retention activity.

Retention marketing can be both highly cost effective and a total waste of money. We have clients at both ends of the spectrum, and it really needs to be tested with your own data.

We highlighted above why this problem can happen.

Think of this scenario:

I am a customer and in the morning on the way to work I see a Facebook ad in my feed

Then I get your newsletter, which I read, and I go and purchase off the back of it

Meta quite rightly sees that a conversion happened after a view event and logs it

But even without the viewthrough issue, similar scenarios happen with click-attribution too

How to solve for it

Once you get to a reasonably high number of customers, this actually becomes one of the easiest places to get a real statistical read.

You test this with a holdout / lift / incrementality test, as you would on prospecting activity in example 3 above.

However, you don’t need to invest thousands in this.

Our approach is:

Split your list in half, with an even mix of cohorts in each half

Upload and sync one of those lists to Meta

Expose ads to that audience for 4-8 weeks depending on list size and conversion rate

Measure the incremental uplift of the two.
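The split in the first step can be sketched like this. It assumes each customer carries a cohort label (acquisition year here, purely as an example) and shuffles within each cohort so both halves end up with the same mix. The function name, the seed and the data are all hypothetical.

```python
import random
from collections import defaultdict


def split_by_cohort(customers, seed=42):
    """customers: list of (customer_id, cohort) pairs. Returns (exposed, holdout)."""
    rng = random.Random(seed)  # fixed seed so the split can be reproduced later
    by_cohort = defaultdict(list)
    for customer_id, cohort in customers:
        by_cohort[cohort].append(customer_id)

    exposed, holdout = [], []
    for cohort in sorted(by_cohort):
        ids = by_cohort[cohort]
        rng.shuffle(ids)           # random assignment within the cohort
        half = len(ids) // 2
        exposed.extend(ids[:half])  # this half gets uploaded to Meta
        holdout.extend(ids[half:])  # this half sees no ads
    return exposed, holdout


# Hypothetical list: six customers from 2023, four from 2024.
customers = [(f"c{i}", "2023") for i in range(6)] + [(f"c{i}", "2024") for i in range(6, 10)]
exposed, holdout = split_by_cohort(customers)
print(len(exposed), len(holdout))  # → 5 5
```

Keeping the holdout list on file matters as much as the upload: at the end of the test you compare purchases between the two lists in your own order data, not in Meta.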

I wrote about this in depth a while ago: you’re measuring retention all wrong – how to measure retention marketing.

We’ve run six of these tests over the last half year.

2 clients found it to be highly incremental and cost effective

1 client found it to be incremental but not at a cost effective CPA

The other 3 saw no impact of the activity

Recommended Meta attribution window: again it’s something to test, but in general, we’re more likely to recommend incremental attribution → click-only → click + viewthrough

Our average adjustments to CPAs

Measurement is in some ways a luxury. In the early days it’s often left behind, and then investor pressure to hit this quarter’s goals usually stops it from becoming a regular occurrence.

And so we have our own proxies that we often use as a standard baseline. These are the universal numbers which we recommend when fresh into an account.

Acquisition: we expect Meta to deliver about 10-25% more value than it says it does.

Retention: we expect it to deliver 50% less value than it says it does (especially on 7-day click, 1-day view).
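As a quick sketch of what those proxies do to a reported CPA. This assumes "value" means conversions, so more value than reported pushes the true CPA down and less value pushes it up. The £30 reported CPA and all names are hypothetical.

```python
# Proxy multipliers from the text, applied to reported conversions (assumed reading).
ACQUISITION_VALUE_MULTIPLIER = (1.10, 1.25)  # Meta delivers 10-25% more than reported
RETENTION_VALUE_MULTIPLIER = 0.50            # Meta delivers 50% less than reported


def adjusted_cpa(reported_cpa: float, value_multiplier: float) -> float:
    """If true conversions = reported * multiplier, true CPA = reported CPA / multiplier."""
    return reported_cpa / value_multiplier


reported = 30.0  # hypothetical CPA shown in Ads Manager

low_mult, high_mult = ACQUISITION_VALUE_MULTIPLIER
acquisition_best = adjusted_cpa(reported, high_mult)
acquisition_worst = adjusted_cpa(reported, low_mult)
retention = adjusted_cpa(reported, RETENTION_VALUE_MULTIPLIER)

print(f"Acquisition: £{acquisition_best:.2f} to £{acquisition_worst:.2f}")
print(f"Retention:   £{retention:.2f}")
```

On these assumptions a £30 reported CPA is really somewhere in the mid-to-high £20s for acquisition, and double the reported figure for retention.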

It’s really important to find out these baselines and proxies for yourself. Some of the swings are fairly substantial and so in a world where a 5% difference can be make or break, knowing for sure is important.

I hope you’ve enjoyed this. Measurement’s one of those subjects that can often get really complicated, but it needn’t be.

We’re experimenting heavily with ways of helping clients with measurement at the moment. If you’ve got questions and you don’t know your true Meta CPA, then get in touch. If not, please leave a comment below. Thanks.

I’m building Ballpoint: the AI-native growth agency I always wanted to hire when I was a DTC founder and before that a head of growth. We have scaled brands from £1m → £50m through digital advertising. If you’re looking for support, then you can email me on josh@weareballpoint.com.

In the meantime, if you enjoyed this please consider giving it a like, a comment or forwarding it to a friend.